Customer Stories

Seamless Automation: Bringing the Power of AI Data Directly into Google Sheets

Details

Company Name: Synterrix

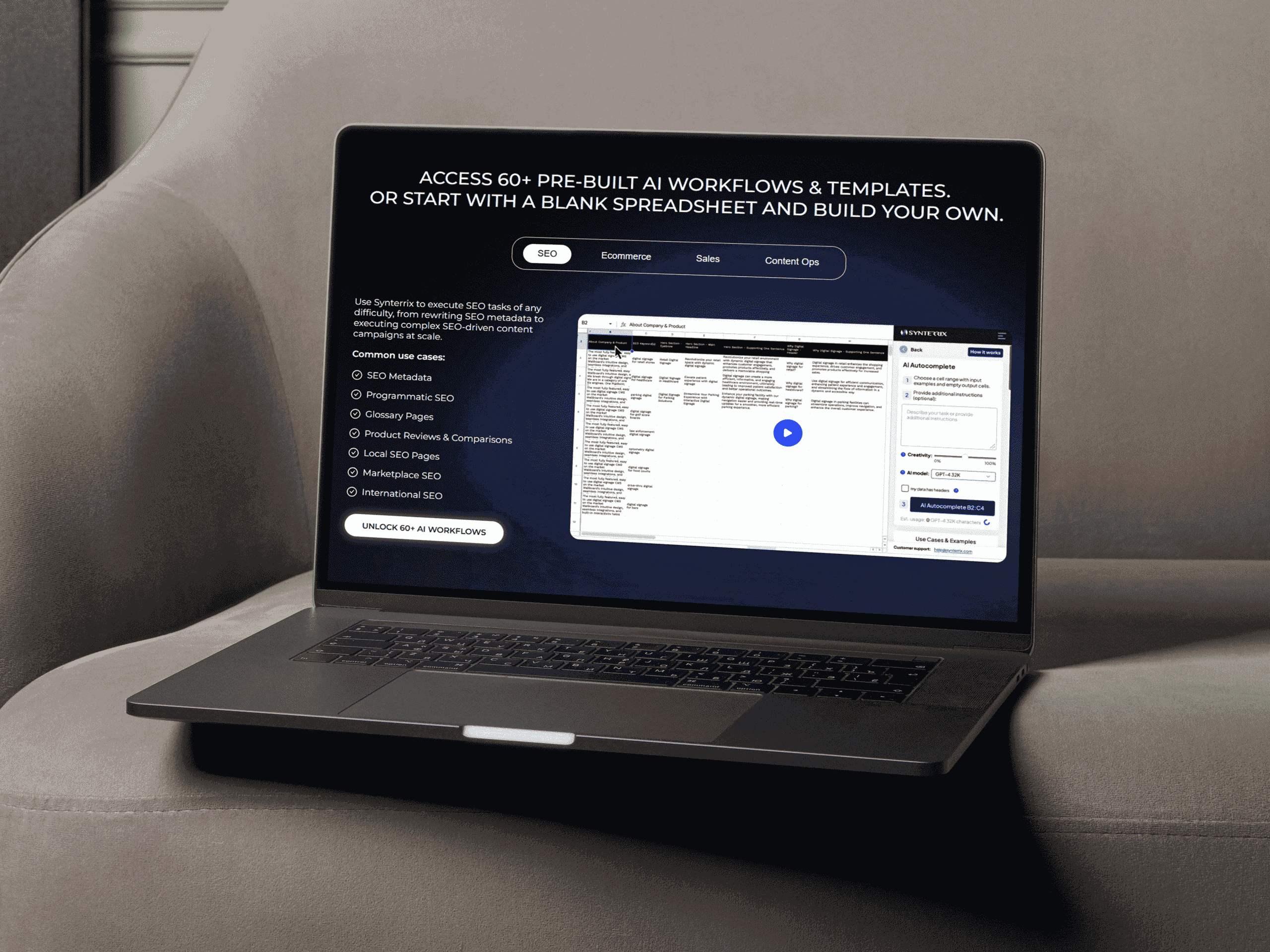

Synterrix is an innovative AI-powered spreadsheet platform, built to democratize automation and smart insights. Their platform empowers users to build, fine-tune, and deploy custom AI models and automate workflows, all within the familiar spreadsheet environment.

Why Choose Jyaba

Despite its advanced platform, Synterrix faced a critical technical gap: fully automating its data pipeline from the web into Google Sheets. The company needed a reliable way to handle anti-bot protections and dynamic website content. Most importantly, the solution had to clean and integrate data in real time without sacrificing performance or scalability.

Results

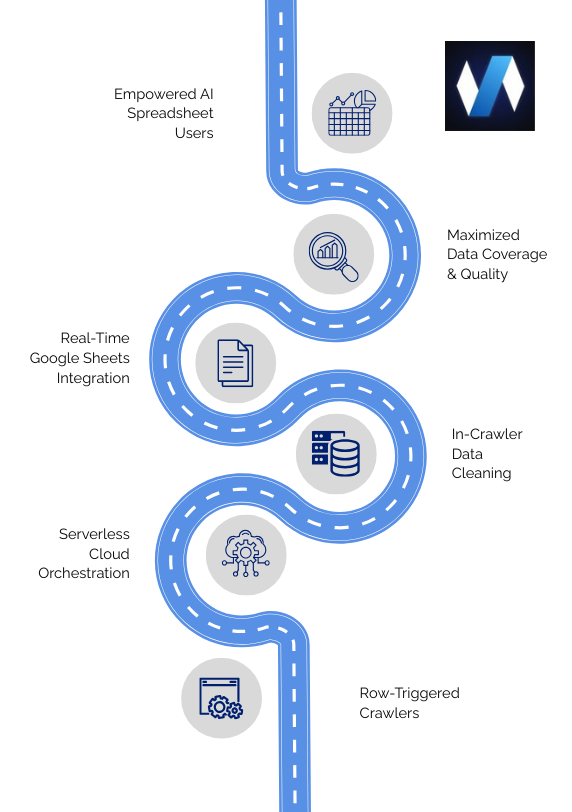

Jyaba partnered with Synterrix to design and implement a smart, serverless pipeline built on cloud automation and advanced scraping techniques. The result turned complex web data workflows into a seamless, user-friendly experience: non-technical users can now trigger complex data tasks directly from their spreadsheets.

Key Insights

→ Synterrix is an innovative AI-powered spreadsheet platform that makes automation and smart insights accessible to all users.

→ Synterrix specializes in advanced data analytics, machine learning, and scalable software, with expertise ranging from jurimetrics to market forecasting.

→ They faced challenges with low data coverage (due to anti-bot protections), dynamic website content, and the complex task of integrating real-time, cleaned data directly into Google Sheets for non-technical users.

→ Jyaba delivered a serverless, end-to-end pipeline that uses row-triggered crawlers (via Firebase/Pub/Sub) and in-crawler data cleaning within the Scrapy framework, ensuring maximized data coverage and instant sheet updates.

Challenges

Synterrix needed a robust solution to several core technical hurdles that affected the reliability and quality of its data pipelines.

Partial Coverage of Sites: Even with internal tools in place, advanced anti-bot protections and geo-restrictions limited successful scraping to only 80–90% of target websites. The sites that could not be reached left unacceptable data gaps.

In-Crawler Data Cleaning: Structuring and cleaning data within the crawler’s performance window was a major hurdle. Post-processing was slow, and messy data undermined the reliability of Synterrix’s AI models.

Real-Time Integration with Sheets: Building a smooth, fast, and reliable pipeline between web crawlers and Google Sheets required a specialized, event-driven backend design. The sheet had to update as soon as a crawl completed.

Dynamic Website Content: JavaScript-heavy pages and frequently changing page structures challenged traditional scraping, which reduced the consistency and quality of the extracted data.

Solutions

Jyaba designed and implemented a smart, serverless pipeline. The system automates the entire process, from crawling to cleaning and integrating data, which creates a frictionless experience for Synterrix users.

Row-Triggered Crawlers via Serverless Architecture: First, Jyaba configured the system so a new row in the user’s spreadsheet automatically triggers a dedicated crawl. This was achieved using a serverless approach leveraging Firebase and Pub/Sub. This design ensures efficiency and cost-effective scaling for the event-driven workflow.
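As a rough illustration of the row-triggered flow, the sketch below decodes a Pub/Sub push delivery into a crawl request. It assumes the new-row event is published as a JSON payload; the field names `sheet_id`, `row`, and `url`, the `CrawlRequest` type, and both helper functions are hypothetical and are not taken from the Synterrix implementation. Only the envelope shape (a base64-encoded `data` field inside `message`) follows the standard Pub/Sub push format.

```python
import base64
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class CrawlRequest:
    sheet_id: str  # hypothetical: spreadsheet that raised the event
    row: int       # hypothetical: 1-based number of the newly added row
    url: str       # hypothetical: target URL entered in that row


def parse_row_event(envelope: dict) -> CrawlRequest:
    """Decode a Pub/Sub push envelope into a crawl request."""
    # Pub/Sub push deliveries carry the payload base64-encoded in message.data.
    raw = base64.b64decode(envelope["message"]["data"])
    payload = json.loads(raw.decode("utf-8"))

    url = str(payload["url"]).strip()
    if not url.startswith(("http://", "https://")):
        # Reject rows that do not contain a crawlable URL.
        raise ValueError(f"row {payload.get('row')} has no crawlable URL: {url!r}")

    return CrawlRequest(
        sheet_id=str(payload["sheet_id"]),
        row=int(payload["row"]),
        url=url,
    )


def make_envelope(payload: dict) -> dict:
    """Build an envelope shaped like a push delivery, for local testing only."""
    data = base64.b64encode(json.dumps(payload).encode("utf-8")).decode("ascii")
    return {"message": {"data": data}}


if __name__ == "__main__":
    event = make_envelope({"sheet_id": "abc", "row": 7, "url": "https://example.com/p"})
    print(parse_row_event(event))
```

Keeping the parsing step as a pure function means the trigger logic can be exercised locally without any cloud resources; the serverless handler would only need to pass the incoming envelope through and start the crawl.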

In-Crawler Data Cleaning for Speed: To guarantee speed and consistency, we structured and cleaned the data directly within the Scrapy framework. This proactive approach ensures the data is instantly ready for analysis upon arrival at the spreadsheet. This also bypasses time-consuming post-processing steps.
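A minimal sketch of what in-crawler cleaning can look like is shown below. Scrapy item pipelines are plain classes that expose a `process_item(self, item, spider)` method, so the example needs no imports from Scrapy itself. The `title` and `price` fields and the cleaning rules are assumptions chosen for illustration; the actual fields and rules used for Synterrix are not described in this story.

```python
import re
from typing import Optional

_WHITESPACE = re.compile(r"\s+")
_NON_NUMERIC = re.compile(r"[^0-9.\-]")


def clean_text(value) -> str:
    """Collapse runs of whitespace and trim; None becomes an empty string."""
    if value is None:
        return ""
    return _WHITESPACE.sub(" ", str(value)).strip()


def clean_price(value) -> Optional[float]:
    """Strip currency symbols and thousands separators; None if not numeric."""
    digits = _NON_NUMERIC.sub("", clean_text(value).replace(",", ""))
    try:
        return float(digits)
    except ValueError:
        return None


class CleaningPipeline:
    """Item pipeline in the Scrapy style: cleans each item as it is scraped."""

    def process_item(self, item, spider):
        # Hypothetical fields; a real pipeline would cover the project's schema.
        item["title"] = clean_text(item.get("title"))
        item["price"] = clean_price(item.get("price"))
        return item


if __name__ == "__main__":
    raw = {"title": "  Acme\n  Widget ", "price": "$1,299.00"}
    print(CleaningPipeline().process_item(raw, spider=None))
```

In a Scrapy project, a class like this would be enabled through the `ITEM_PIPELINES` setting, so every item is normalized before it leaves the crawler and no separate post-processing pass is needed.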

Real-Time Sheet Updates: Cleaned, high-quality data is pushed automatically to the designated Google Sheets, so teams have instant access to it. This real-time synchronization lets non-technical users work with fresh web data without leaving their familiar spreadsheet environment.
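To make the write-back step concrete, the sketch below builds the A1-notation range and the `values` body that the Google Sheets API `spreadsheets.values.update` method expects for a single row. The function names, the column layout (results written starting at column B), and the field names are assumptions for illustration, and the sheet name is assumed to contain no spaces or special characters that would require quoting. The API call itself is left out so the example stays self-contained.

```python
from typing import Sequence


def column_letter(index: int) -> str:
    """Convert a 1-based column index to A1 letters (1 -> A, 27 -> AA)."""
    if index < 1:
        raise ValueError("column index must be >= 1")
    letters = ""
    while index > 0:
        index, remainder = divmod(index - 1, 26)
        letters = chr(ord("A") + remainder) + letters
    return letters


def build_row_update(
    sheet_name: str,
    row: int,
    item: dict,
    columns: Sequence[str],
    first_column: int = 2,  # assumption: column A holds the URL that triggered the crawl
) -> dict:
    """Return the range and request body for writing one cleaned item to one row."""
    if not columns:
        raise ValueError("at least one column is required")
    # Missing or None fields become empty cells so the row keeps its shape.
    values = ["" if item.get(name) is None else item[name] for name in columns]
    start = column_letter(first_column)
    end = column_letter(first_column + len(columns) - 1)
    return {
        "range": f"{sheet_name}!{start}{row}:{end}{row}",
        "body": {"values": [values]},
    }


if __name__ == "__main__":
    update = build_row_update(
        "Sheet1", 7, {"title": "Acme Widget", "price": 1299.0}, ["title", "price"]
    )
    print(update)
```

The returned `range` and `body` would then be passed to the Sheets API client together with the spreadsheet ID and a `valueInputOption`, writing the cleaned values into the same row that triggered the crawl.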

Scalable Automation: Finally, the delivered solution is highly scalable. It efficiently handles dynamic websites and massive data volumes. This effectively solves the challenges of anti-bot protections and JavaScript-heavy content to maximize data coverage.

Success Metrics

The result was a scalable, secure, and high-performing scraping solution that turned complex government data into actionable business intelligence delivered accurately and on time.

Data Coverage Improvement (%)

Time Saved per User per hour

System Uptime / Pipeline Reliability (%)

Data Delivered Despite the Barriers

Through deep technical expertise, proactive planning, and advanced automation strategies, Jyaba helped Synterrix overcome some of the toughest data collection challenges.
