Crawlee is a web scraping and browser automation library available for both JavaScript and Python. The two versions offer similar functionality, but they can differ noticeably in performance, cost, and efficiency. In this post, we compare them in a real-world test, using the Books to Scrape website (books.toscrape.com) as our test case.

How we performed the test:

We implemented the same scraping logic in both JavaScript and Python.

For JavaScript, we chose the Crawlee library with Cheerio.

For Python, we used Crawlee together with BeautifulSoup.

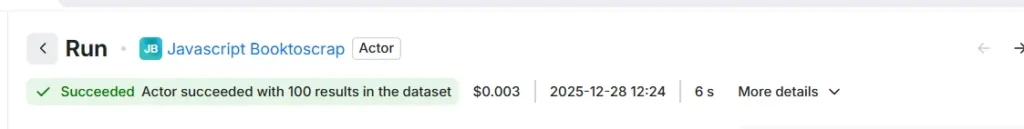

Result of the JavaScript Apify Actor run that pulled 100 results:

As shown in the image above, the cost for 100 results is $0.003, with a total execution time of 6 seconds.

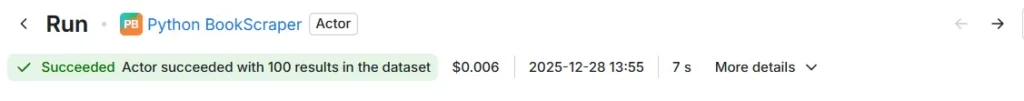

Next, we ran the second scraper, which is built in Python and follows the same logic, to scrape book data from books.toscrape.com.

Running the same logic in Python, we observed a cost of $0.006 per 100 results and a total execution time of approximately 7 seconds.

| Metric (per 100 results) | JavaScript | Python |
| --- | --- | --- |
| Cost | $0.003 | $0.006 |
| Time | 6 seconds | ~7 seconds |
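To put these figures in perspective, here is a minimal sketch that computes the Python-to-JavaScript ratios and projects the cost to a larger run. The input numbers come from the two runs above; the function names and the linear projection to 1,000,000 results are assumptions for illustration only, since real runs amortize startup time and may not scale linearly.

```python
# Figures reported in this post, each for a run of 100 results.
RUNS = {
    "JavaScript": {"cost_usd": 0.003, "seconds": 6.0},
    "Python": {"cost_usd": 0.006, "seconds": 7.0},
}


def ratio(metric: str, runs: dict = RUNS) -> float:
    """Return Python's value for `metric` divided by JavaScript's."""
    return runs["Python"][metric] / runs["JavaScript"][metric]


def projected_cost(cost_per_100: float, total_results: int) -> float:
    """Scale a per-100-results cost linearly (an assumption, not a measurement)."""
    return cost_per_100 * total_results / 100


if __name__ == "__main__":
    print(f"Cost ratio (Python / JavaScript): {ratio('cost_usd'):.2f}x")
    print(f"Time ratio (Python / JavaScript): {ratio('seconds'):.2f}x")
    for name, run in RUNS.items():
        cost = projected_cost(run["cost_usd"], 1_000_000)
        print(f"{name}: about ${cost:.2f} per 1,000,000 results")
```

The cost gap (2x) is much larger than the time gap (roughly 1.17x), which suggests that run time alone does not explain the price difference.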

Why does JavaScript win on cost and time?

1. Runtime Architecture

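- JavaScript/Node.js: Apify’s platform was originally built for Node.js, so its tooling is most mature there

- Python: Runs in a Docker container, as every Apify Actor does, but the Python runtime and its dependencies can add startup overhead

- Result: JavaScript tends to have less startup time and tighter platform integration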

2. Resource Usage & Billing

- Memory usage: Python typically uses more RAM

- CPU utilization: Python’s startup and execution often consume more CPU seconds

- Infrastructure overhead: Python Actors may carry additional runtime and dependency layers in their container image

Cost breakdown analysis:

- JavaScript: Lower base compute units + faster execution = lower cost

- Python: Higher base compute units (heavier runtime) + slower execution = higher cost

- Platform bias: Apify’s infrastructure is optimized for Node.js
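Apify measures compute in compute units, where one compute unit corresponds to 1 GB of memory used for one hour. The sketch below shows how memory and run time multiply into cost. The function names, the memory settings, and the price per compute unit are hypothetical: this post does not report the memory allocated to either Actor or the plan pricing, so the numbers are placeholders rather than a reconstruction of the bills above.

```python
def compute_units(memory_mb: int, duration_seconds: float) -> float:
    """Compute units = memory in GB multiplied by run time in hours."""
    return (memory_mb / 1024) * (duration_seconds / 3600)


def run_cost(memory_mb: int, duration_seconds: float, usd_per_cu: float) -> float:
    """Cost of one run at a given (plan-dependent) price per compute unit."""
    return compute_units(memory_mb, duration_seconds) * usd_per_cu


if __name__ == "__main__":
    # Hypothetical inputs: memory sizes and the price are NOT from this post.
    usd_per_cu = 0.30
    scenarios = {
        "JavaScript (hypothetical 1024 MB, 6 s)": (1024, 6),
        "Python (hypothetical 2048 MB, 7 s)": (2048, 7),
    }
    for label, (memory_mb, seconds) in scenarios.items():
        cu = compute_units(memory_mb, seconds)
        cost = run_cost(memory_mb, seconds, usd_per_cu)
        print(f"{label}: {cu:.6f} CU, about ${cost:.6f}")
```

Because memory and duration multiply, an Actor that needs a larger memory allocation and also runs slightly longer is penalized on both factors at once.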

Conclusion

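In our test, the Python Actor cost twice as much and took about one second longer for the same 100 results. The gap appears to come from the runtime rather than from the scraping logic, which was the same in both versions. For cost-sensitive, high-volume scraping on Apify, JavaScript will usually be the better choice unless you have specific Python dependencies that justify the overhead.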